Are Indian institutions as poor as the QS and Times global university ratings suggest?

Quacquarelli Symonds (QS) and Times Higher Education (THE) recently released their 2013 global university rankings. In both sets of rankings, institutions from India are nowhere in the picture. In fact, their ranks have fallen compared to last year.

Are institutions from India that bad? Have institutions from India been "lazy" in providing the right data? To show that there are problems with the rankings, I analyse the parameters of the IIT-Guwahati data (since these are available to me, but the results can be easily generalised to other IITs; IIT-Delhi and Panjab University data are also shown).

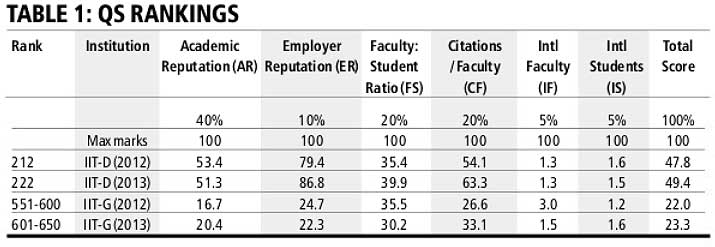

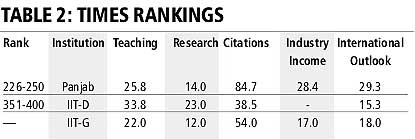

The first table is for QS, and the second for THE. All scores are relative, with the top-ranked institution in each category getting a score of 100.

One, the faculty-student ratio of IIT-G is the best among the IITs, but it is showing a decline and is much worse than IIT-D's in the QS table. Clearly, there is an error here. Two, the IITs are not allowed to take international students at the BTech level. There is scope for increasing the number of foreign PhD students. But even here there is a restriction, as government assistantships can only be given to Indian citizens. Without aid, it is difficult to attract good international PhD students. Hiring international faculty on a regular basis is not allowed. They can be hired on contract for up to five years, but only if the salary is at least $25,000 annually (so, effectively, only professors are allowed). The question remains: is the internationalisation of campuses an important parameter for excellence? Western countries have a clear advantage.

Three, 50 per cent of the weightage is based on "reputation" (AR: 40 per cent and ER: 10 per cent) in the QS rankings, and 33 per cent in THE (not shown in table). IIT-G got a score of 0 for academic reputation and a score of 1 in research reputation in THE. This is reflected in the scores for teaching and research. These organisations are now aggressively marketing their products through which institutions can enhance their "reputation". Thus, we have been invited to advertise in their QS Top University Guide 2013 (with discounts if we opt to advertise in more than one language) and in other publications, to attend seminars and conferences (with registration fees of course), and so on. Can we rely primarily on reputations to decide ranks? Academics all over the world are asked their opinion of the top institutions globally and in their country. The chances of getting an IIT's name included by a US professor are quite slim. The number of respondents is proportional to the number of institutes available for selection in that country. So the responses are heavily weighted in favour of developed countries. Respondents are not asked to give their inputs for each of the listed universities (it may be impractical to do so, as there is a large number of them). Instead, each respondent is asked to give a list of 5-10 universities he or she thinks are globally well known, and well known in their country. This method perpetuates the existing ranks.

Four, consider the categories CF and citations. QS divides the total number of citations in the last five years by the number of faculty in the last year. IIT-G had 323 faculty members in 2013, but only 220 in 2009. So its numbers clearly cannot be compared with institutions like Cambridge and Oxford, where the faculty numbers are almost constant. Further, since a five-year average is taken, one or two "star" papers can make a huge difference to the numbers. For example, a review paper, "The Hallmarks of Cancer", authored by two professors from the University of California, San Francisco, and the Massachusetts Institute of Technology, has about 10,000 citations. This paper alone will have boosted the CF figure of both these institutions significantly. THE uses a different method for citations and probably does not remove self-citations. The high scores of Panjab and IIT-G vis-à-vis IIT-D could be explained by this. Panjab University's high energy physics group (and to a lesser extent IIT-G's) is part of global experiments at CERN and Fermilab, and papers from these projects have very high citations. Thus, a small group of international collaborations is providing a high score. Isn't the median number of citations per faculty a better measure than the average (there are other issues; for example, citations in the sciences are usually far more numerous than in engineering)?
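The two distortions described above can be illustrated with a little arithmetic. The sketch below is only an illustration, not the method QS or THE actually uses: the faculty headcounts are the IIT-G figures quoted above, but the citation total, the per-faculty citation counts and the midpoint-headcount alternative are hypothetical assumptions chosen to make the effect visible.

```python
from statistics import mean, median

# Faculty headcounts quoted in the article for IIT-G.
FACULTY_2009 = 220
FACULTY_2013 = 323

# HYPOTHETICAL five-year citation total, chosen only for illustration.
TOTAL_CITATIONS = 10_000


def citations_per_faculty(total_citations: float, faculty_count: float) -> float:
    """Divide a citation total by a faculty headcount."""
    return total_citations / faculty_count


# Method described in the article: divide by the final-year headcount.
cf_last_year = citations_per_faculty(TOTAL_CITATIONS, FACULTY_2013)

# ASSUMED alternative: divide by the midpoint of the two known headcounts,
# a rough stand-in for the average faculty size over the five-year window.
cf_midpoint = citations_per_faculty(
    TOTAL_CITATIONS, mean([FACULTY_2009, FACULTY_2013])
)

# HYPOTHETICAL citation counts for seven faculty members, one of whom
# co-authored a single "star" paper with 10,000 citations.
per_faculty = [5, 8, 10, 12, 15, 20, 10_000]

print(f"CF, last-year headcount: {cf_last_year:.1f}")
print(f"CF, midpoint headcount:  {cf_midpoint:.1f}")
print(f"mean per faculty:   {mean(per_faculty):.1f}")
print(f"median per faculty: {median(per_faculty)}")
```

With a growing faculty, dividing by the final-year headcount gives a lower figure than dividing by a headcount closer to the period average. In the second comparison, the single star paper dominates the mean while leaving the median untouched, which is the case for preferring the median.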

So, what can we conclude from all of the above? Surely, it should be clear that the ranking of universities is not a simple task. We have only scratched the surface, as have QS and THE. There are so many other aspects of an educational institution that they have not even touched upon. Many of these aspects are qualitative in nature, and it is difficult to quantify them. This is not to say that Indian universities do not need to improve their rankings. They do, and to begin with, we will have to provide data to these organisations in the format they expect. Interactions are already on. But if we want Indian institutions to get appreciably higher QS and THE rankings, we must allow the institutions to do the following: a) spend heavily to aggressively market the institute among academia and corporations in the US and Europe; b) substantially increase the number of foreign students. The government must allow undergraduate admissions, allow assistantships for foreigners and remove ceilings on incomes for foreign faculty; c) hire a large number of temporary "teachers" to boost the faculty-student ratio (which counts the number of "academic staff", and which apparently is done by many US universities); and d) create a network among Indian institutions to encourage citations of papers of other Indian institutions, that is, scratch each other's backs. Finally, of course, all institutions must strive to improve the quality and quantity of research, teaching, industry interaction, etc.

The writer, former director of IIT-Guwahati, is mentor-director of the newly established IIIT-Guwahati